VR Multicopter Teleoperation

Third-person VR control system for aerial robots with real-time SLAM

First-person-view (FPV) drone piloting suffers from limited spatial awareness: operators cannot see where the drone sits relative to nearby obstacles. This leads to collisions, especially in confined indoor environments.

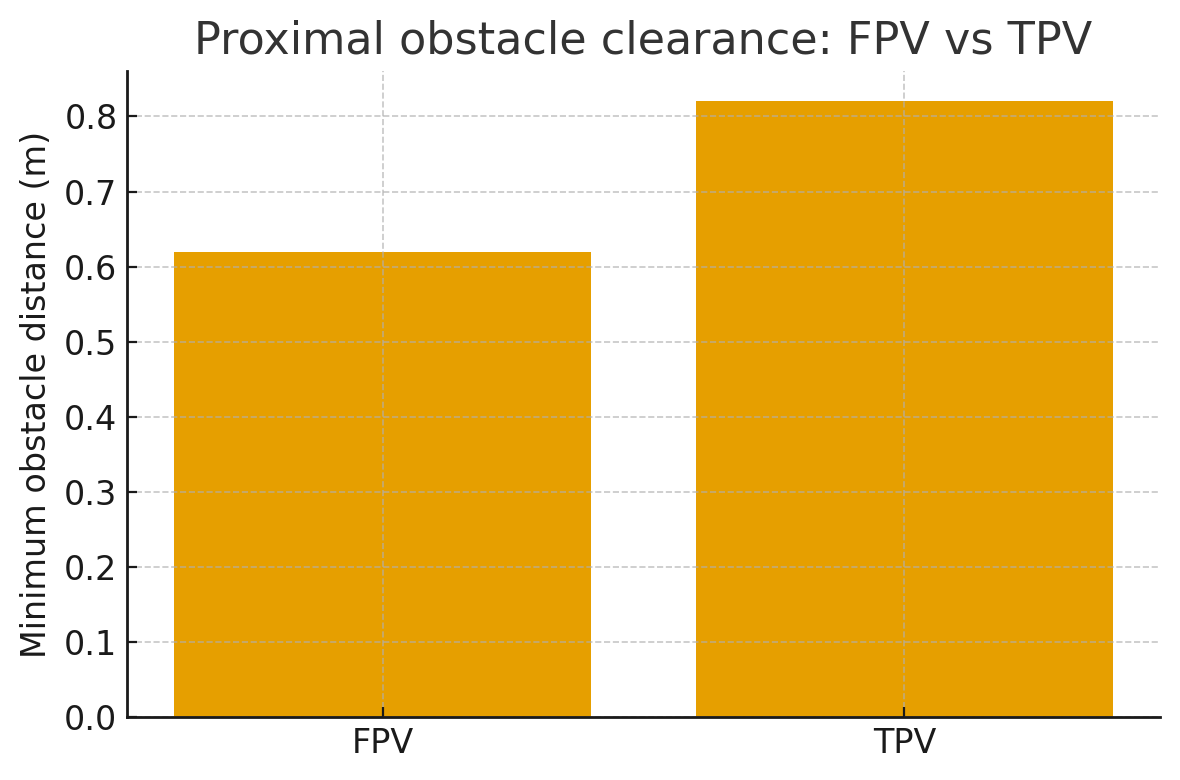

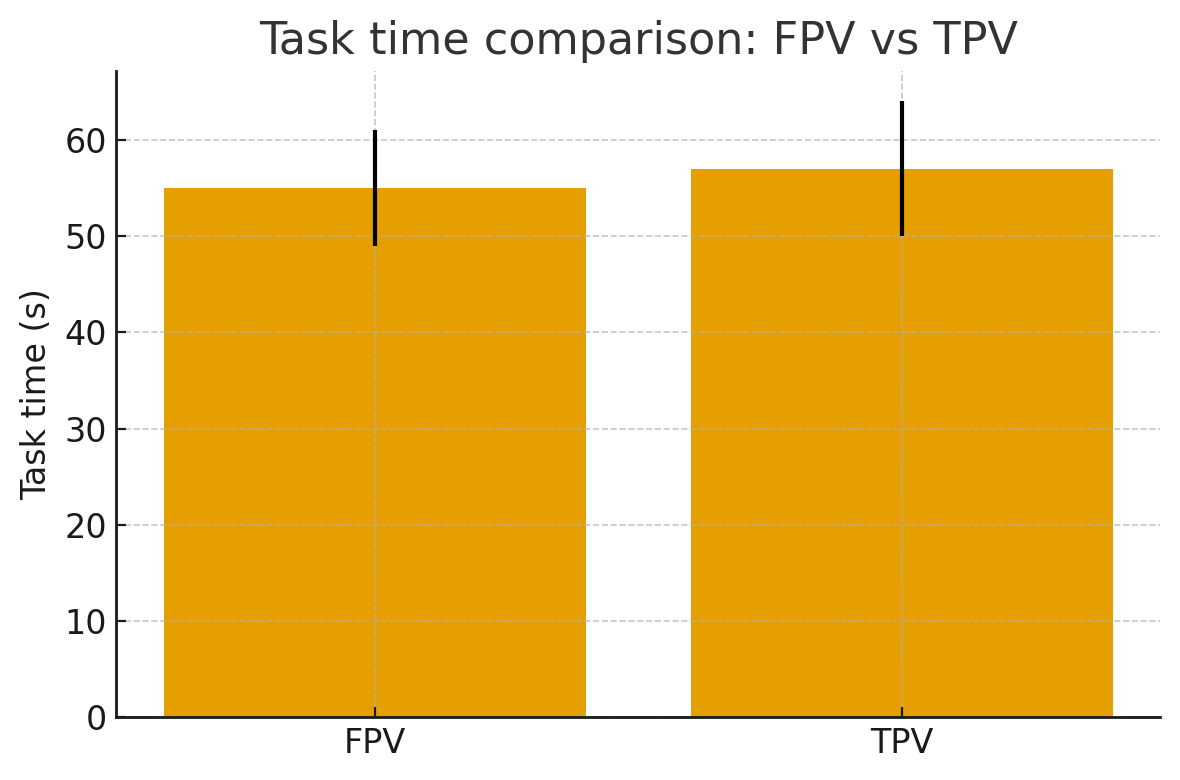

The question: can a third-person virtual reality perspective improve obstacle clearance without slowing the operator down?

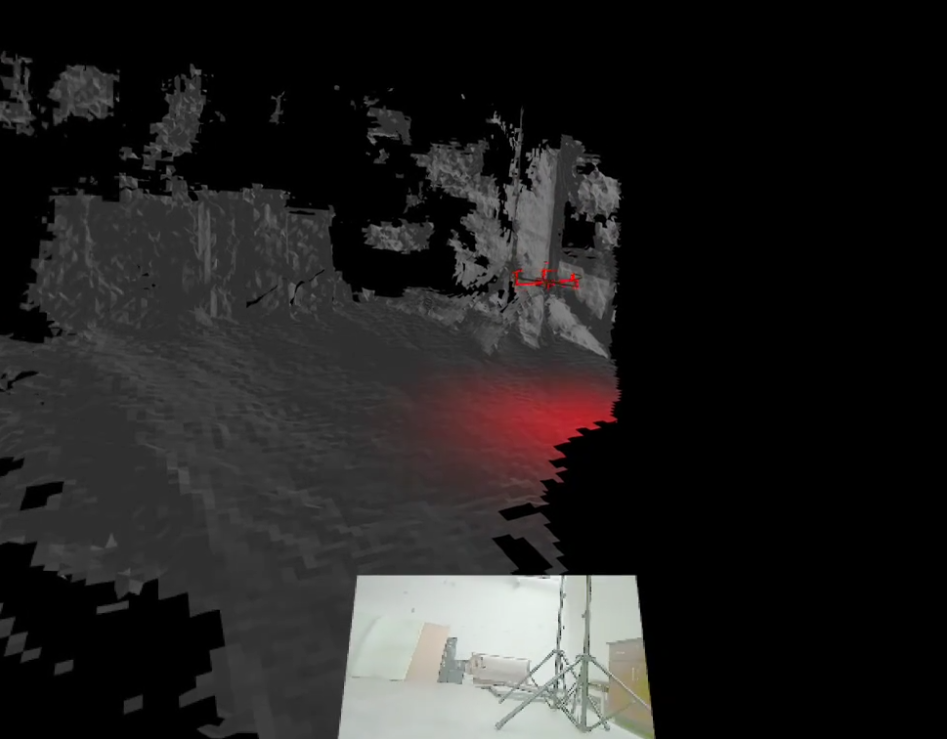

Real-time 3D virtual environment mirroring the physical space

I built a system that creates a real-time 3D virtual environment mirroring the physical space. The operator sees the drone from a third-person camera angle in VR, which provides spatial context that FPV cannot.

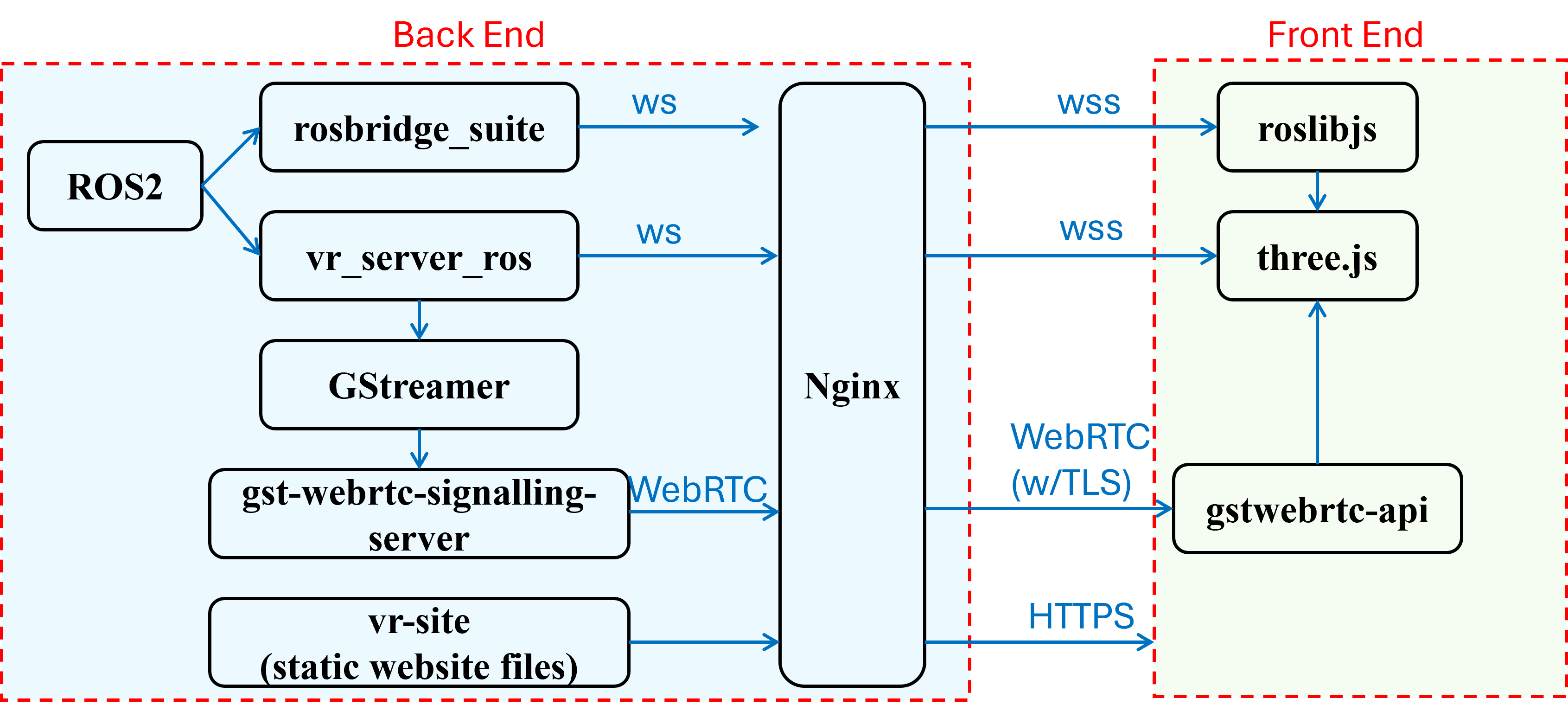

Architecture: ZED stereo camera → real-time SLAM → 3D environment reconstruction → ROS2 bridge → WebXR on Meta Quest 3. A low-latency WebXR-to-ROS2 control bridge runs on an NVIDIA Jetson Orin NX, which handles SLAM, ROS2, and WebSocket streaming simultaneously.
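To make the control half of that bridge concrete, here is a minimal sketch of how thumbstick input from the headset controllers might be turned into a velocity command and serialized for the WebSocket link. The message layout (`type`, `vx`, `vy`, `vz`, `yaw_rate`), the stick assignment, the deadzone value, and all function names are assumptions for illustration, not the project's actual protocol. It also assumes the common gamepad convention that pushing a stick forward gives a negative y value, and the usual ROS body frame (x forward, y left, z up).

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class VelocityCommand:
    """Body-frame velocity setpoint (hypothetical message layout)."""
    vx: float        # forward, m/s
    vy: float        # left, m/s
    vz: float        # up, m/s
    yaw_rate: float  # counterclockwise, rad/s


def apply_deadzone(value: float, deadzone: float = 0.1) -> float:
    """Zero out small stick deflections and rescale the rest to [-1, 1]."""
    if abs(value) < deadzone:
        return 0.0
    sign = 1.0 if value > 0 else -1.0
    return sign * (abs(value) - deadzone) / (1.0 - deadzone)


def axes_to_command(left_x: float, left_y: float,
                    right_x: float, right_y: float,
                    max_speed: float = 1.0,
                    max_yaw_rate: float = 1.0) -> VelocityCommand:
    """Map two thumbsticks to a velocity command (assumed stick layout).

    Left stick: yaw (x) and climb (y). Right stick: lateral (x) and forward (y).
    Signs are flipped because stick-forward and stick-left are negative on
    the raw axes, while the body frame treats forward, left, and up as positive.
    """
    return VelocityCommand(
        vx=-apply_deadzone(right_y) * max_speed,
        vy=-apply_deadzone(right_x) * max_speed,
        vz=-apply_deadzone(left_y) * max_speed,
        yaw_rate=-apply_deadzone(left_x) * max_yaw_rate,
    )


def encode_command(cmd: VelocityCommand) -> str:
    """Serialize a command as a JSON text frame for the WebSocket link."""
    return json.dumps({"type": "cmd_vel", **asdict(cmd)})


if __name__ == "__main__":
    # Right stick pushed fully forward: fly forward at max_speed.
    print(encode_command(axes_to_command(0.0, 0.0, 0.0, -1.0)))
```

On the robot side, a ROS2 node would decode such a frame and republish it as a velocity message. Keeping the mapping in a pure function like this makes it easy to unit-test without a headset or a ROS2 installation.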

Native VR applications require app store submissions, per-headset SDK integration, and build pipelines. WebXR runs in any WebXR-capable headset browser - the same codebase works on Meta Quest 3, Quest Pro, and future devices without modification.

A fixed third-person camera forces operators to accept a single vantage point that may not suit their spatial reasoning style. An adjustable camera offset - configurable in real time via controller input - lets each operator dial in the viewing angle that feels most natural.
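As a rough illustration of the adjustable offset, the sketch below places the virtual camera relative to the drone using three parameters: distance behind, height above, and an orbit angle. The parameterization, the default values, the clamping limits, and the names `CameraOffset`, `camera_position`, and `adjust_offset` are all assumptions made for this example. The actual system may expose different controls.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraOffset:
    """Third-person camera placement relative to the drone (hypothetical)."""
    distance: float = 2.0  # metres from the drone in the horizontal plane
    height: float = 1.0    # metres above the drone
    azimuth: float = 0.0   # radians; 0 = directly behind, positive orbits counterclockwise


def camera_position(drone_xyz, drone_yaw: float, offset: CameraOffset):
    """Return the camera's world position for a drone pose and an offset.

    Assumes a z-up world frame with yaw measured counterclockwise from +x.
    """
    x, y, z = drone_xyz
    # Point opposite the drone's heading, then rotate by the orbit angle.
    angle = drone_yaw + math.pi + offset.azimuth
    return (
        x + offset.distance * math.cos(angle),
        y + offset.distance * math.sin(angle),
        z + offset.height,
    )


def adjust_offset(offset: CameraOffset,
                  d_distance: float = 0.0,
                  d_height: float = 0.0,
                  d_azimuth: float = 0.0,
                  min_distance: float = 0.5,
                  max_distance: float = 10.0) -> CameraOffset:
    """Apply an incremental change from controller input, keeping values sane."""
    distance = min(max(offset.distance + d_distance, min_distance), max_distance)
    # Wrap the orbit angle into [-pi, pi) so it never grows without bound.
    azimuth = (offset.azimuth + d_azimuth + math.pi) % (2.0 * math.pi) - math.pi
    return CameraOffset(distance, offset.height + d_height, azimuth)


if __name__ == "__main__":
    offset = adjust_offset(CameraOffset(), d_distance=0.5, d_azimuth=math.radians(30))
    print(camera_position((0.0, 0.0, 1.0), 0.0, offset))
```

Calling `adjust_offset` once per frame with small deltas derived from controller input would let the operator change the viewpoint smoothly while flying, and the clamp keeps the camera from collapsing onto the drone.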

The Jetson Orin NX provides enough GPU compute to run ZED SDK SLAM, process point clouds, serve the WebSocket stream, and host the ROS2 control stack concurrently - a combined workload that would overwhelm a typical embedded board and would otherwise require an external workstation.

System architecture: ZED camera → SLAM → ROS2 bridge → WebXR on Meta Quest 3

Key features

By the numbers

The 0.20 m clearance improvement may sound modest, but for a micro aerial vehicle in a confined lab, it's the difference between a clean pass and a collision. What surprised me most was that operators didn't slow down - the spatial context from the third-person view (TPV) let them plan paths more efficiently, compensating for the overhead of processing a richer visual stream.

The WebXR decision was controversial early on but proved right - it made the system headset-agnostic and dramatically simplified deployment compared to building native VR applications.